In our previous blog we covered some fundamentals of foveated rendering (FR). Let’s now take a look at how it’s being delivered today, and at some of the exciting technologies that surround it.

Fixed Foveated Rendering (FFR)

FFR applies a static foveation pattern to the periphery of the display (the lens distortion region in particular). This reduces the rendering load in areas that aren’t clearly visible in the headset unless the wearer glances sideways, which is an unnatural thing to do.

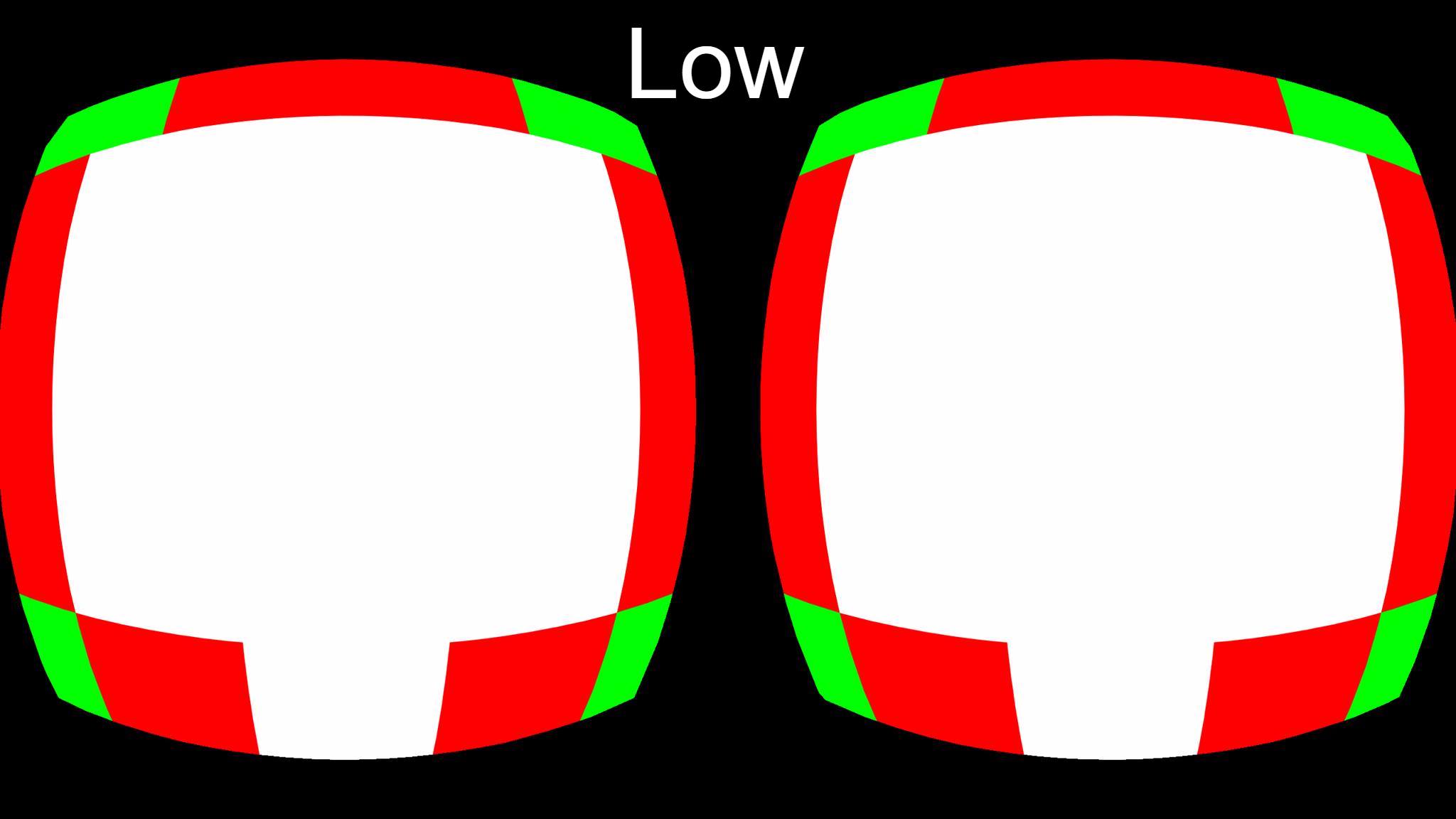

This can be applied at several levels of severity, from low to high, depending on the performance improvement the user or developer is after: the higher the level, the greater the reduction in peripheral detail and the bigger the boost in performance. The downside of fixed foveation, as you can imagine, is that the effect can be obvious to the viewer.
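To make the idea of levels a little more concrete, here is a minimal Python sketch of how a fixed foveation map might be built: each screen tile gets a coarser shading rate the further it sits from the centre of the lens. The level names, radii and rates are purely illustrative assumptions on our part, not values taken from any headset SDK.

```python
import math

# Hypothetical presets: (inner radius, outer radius) as fractions of the
# distance from the lens centre to the corner. Not taken from any real SDK.
FFR_LEVELS = {
    "low": (0.75, 0.95),
    "medium": (0.60, 0.85),
    "high": (0.45, 0.70),
}


def shading_rate(tile_x, tile_y, tiles_x, tiles_y, level="medium"):
    """Return the shading block size for one tile (1 = full detail)."""
    inner, outer = FFR_LEVELS[level]
    # Normalise the tile centre to [-1, 1], with (0, 0) at the lens centre.
    nx = (tile_x + 0.5) / tiles_x * 2.0 - 1.0
    ny = (tile_y + 0.5) / tiles_y * 2.0 - 1.0
    # Radial distance, scaled so that the corners sit at roughly 1.0.
    r = math.hypot(nx, ny) / math.sqrt(2.0)
    if r < inner:
        return 1  # full resolution in the centre
    if r < outer:
        return 2  # one shaded sample per 2x2 pixels
    return 4      # one shaded sample per 4x4 pixels at the edge


def build_map(tiles_x, tiles_y, level="medium"):
    """Build the whole map once; with FFR it never changes at runtime."""
    return [
        [shading_rate(x, y, tiles_x, tiles_y, level) for x in range(tiles_x)]
        for y in range(tiles_y)
    ]


if __name__ == "__main__":
    for row in build_map(8, 8, "high"):
        print(" ".join(str(rate) for rate in row))
```

Because the map depends only on the tile position and the chosen level, it can be computed once up front, which is exactly what makes the approach "fixed".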

Dynamic Fixed Foveated Rendering (DFFR)

A bit of a mouthful, yes, but Dynamic FFR makes a lot of sense over standard FFR. It’s an adaptive approach wherein the level of foveation adjusts to suit system load, i.e. it is applied when it is most needed. This means that DFFR is not only less obvious to the viewer, but can also be applied in a more intelligent and efficient manner.

For example, when there isn’t much going on in the virtual scene, chances are that applying foveation won’t provide much of a performance benefit. In a graphically busy scene, by contrast, we can expect a greater performance gain, so it makes sense to apply it there. Furthermore, the foveation will be less apparent to the viewer in a busy scene anyway.
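As a rough illustration of that adaptive behaviour, the Python sketch below steps the foveation level up when the GPU is close to blowing its frame budget and relaxes it again when there is headroom. The class name, the thresholds and the 72 fps target are our own assumptions for the sake of the example; real runtimes will have their own heuristics.

```python
LEVELS = ["off", "low", "medium", "high"]


class DynamicFoveation:
    """Hypothetical controller: more foveation under load, less when idle."""

    def __init__(self, target_fps=72.0, raise_at=0.90, lower_at=0.70):
        self.budget_ms = 1000.0 / target_fps  # time available per frame
        self.raise_at = raise_at  # fraction of budget above which we step up
        self.lower_at = lower_at  # fraction of budget below which we step down
        self.index = 0            # start with foveation off

    def update(self, gpu_frame_ms):
        """Feed in the last GPU frame time; returns the level to use next."""
        load = gpu_frame_ms / self.budget_ms
        if load > self.raise_at and self.index < len(LEVELS) - 1:
            self.index += 1
        elif load < self.lower_at and self.index > 0:
            self.index -= 1
        return LEVELS[self.index]


if __name__ == "__main__":
    controller = DynamicFoveation()
    # Made-up frame times (ms): a quiet scene, a busy spell, then quiet again.
    for frame_ms in [6.0, 13.5, 13.5, 11.0, 5.0]:
        print(frame_ms, controller.update(frame_ms))
```

The gap between the two thresholds is deliberate: without it the level would flip back and forth every frame, which would likely be more noticeable than the foveation itself.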

Dynamic Foveated Rendering (Eye-Tracked)

Not to be confused with the above: from a VR purist’s perspective, you might consider Dynamic FR the true application of foveated rendering. It uses eye-tracking hardware and software to detect eye movement (“gaze detection”) and so inform the render pipeline where the ‘point of focus’ is located. A noteworthy side benefit of eye tracking is that it can function as a navigation tool in place of VR controllers.
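To sketch what "informing the render pipeline" could look like, the Python snippet below takes a gaze direction from a (hypothetical) eye tracker and picks a shading rate for a tile based on how many degrees it sits away from the point of focus. The function names and the 10 and 25 degree thresholds are assumptions made for illustration only.

```python
import math


def eccentricity_deg(gaze_dir, tile_dir):
    """Angle in degrees between the gaze ray and the ray through a tile."""
    dot = sum(g * t for g, t in zip(gaze_dir, tile_dir))
    norm = math.sqrt(sum(g * g for g in gaze_dir)) * math.sqrt(
        sum(t * t for t in tile_dir)
    )
    # Clamp to guard against floating point drift just outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def rate_for_tile(gaze_dir, tile_dir, fovea_deg=10.0, mid_deg=25.0):
    """Return the shading block size for a tile (1 = full detail)."""
    angle = eccentricity_deg(gaze_dir, tile_dir)
    if angle <= fovea_deg:
        return 1  # where the eye is looking: full detail
    if angle <= mid_deg:
        return 2  # near periphery: one shaded sample per 2x2 pixels
    return 4      # far periphery: one shaded sample per 4x4 pixels


if __name__ == "__main__":
    straight_ahead = (0.0, 0.0, -1.0)
    off_to_the_right = (1.0, 0.0, -1.0)
    print(rate_for_tile(straight_ahead, off_to_the_right))    # viewer looks ahead
    print(rate_for_tile(off_to_the_right, off_to_the_right))  # viewer looks right
```

The key difference from the fixed approach is that the full-detail region follows the gaze every frame rather than staying locked to the centre of the lens.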

As you might imagine, in part from our discussion of eye movements in the previous blog, development in this area has been difficult given the nature of the human eye. Its rapid, ever-changing movements and form (pupil size, as measured in pupillometry, etc.) are complex enough on their own, before even considering the variation from person to person.

Current State of Affairs

FFR, DFFR and eye-tracked methods are already in use today. The Vive Pro Eye (terrible name, I know!), which features integrated eye tracking for FR, has recently been made available to consumers. The upcoming HP Reverb G2 Omnicept is another device supporting eye tracking and foveated rendering.

Companies such as Tobii and NVIDIA have been at the forefront recently, pushing hardware and software technologies such as Variable Rate Supersampling (VRSS). Unity and Unreal are also supporting developers with FR: Unreal 4.26 brought support for FFR on the Quest, and the latest version at the time of writing, 4.27, brings support for eye-tracked FR with variable rate shading (VRS).

So evidently, this is a technology of today and not just wishful thinking for tomorrow.

Some Playing Around

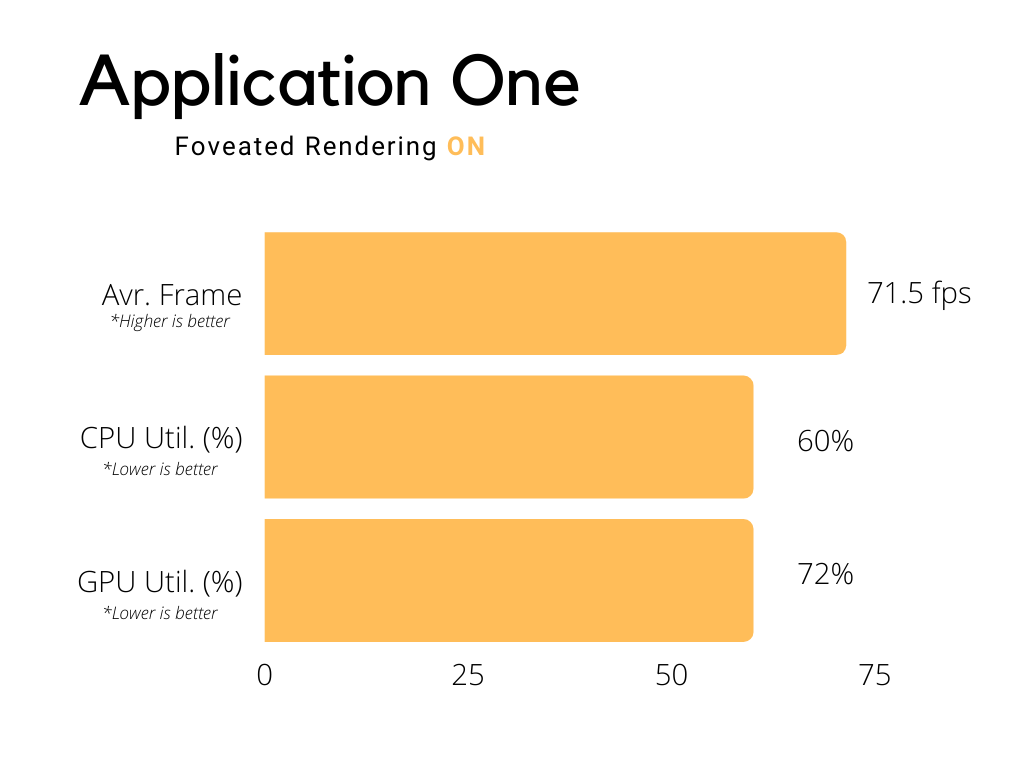

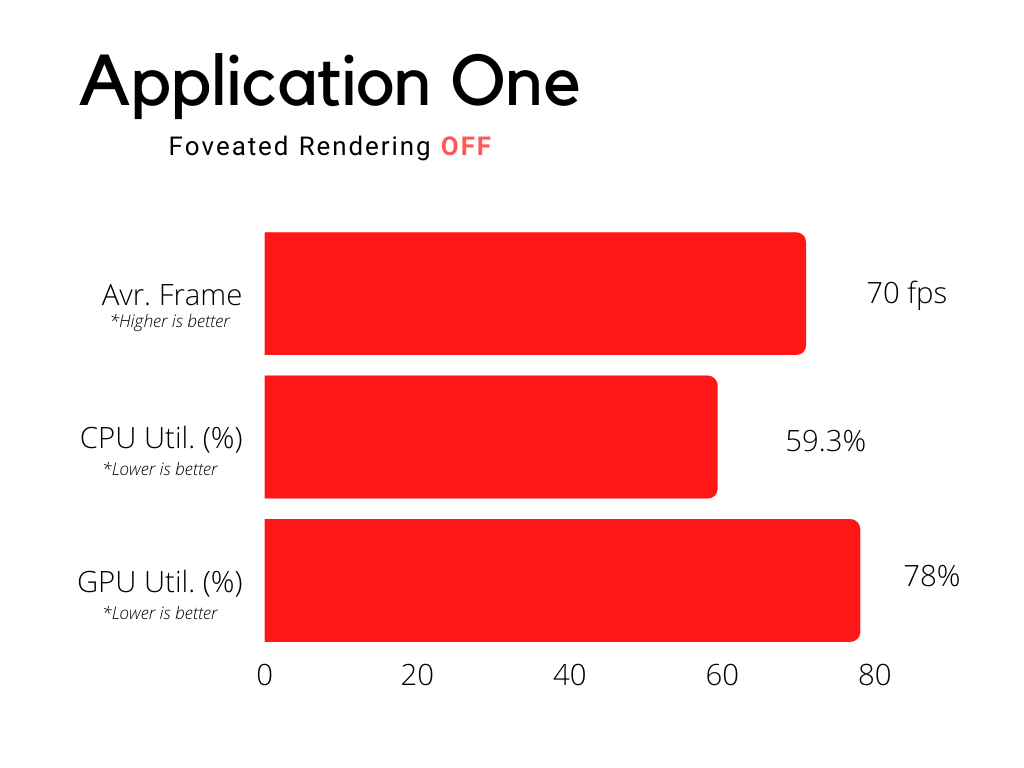

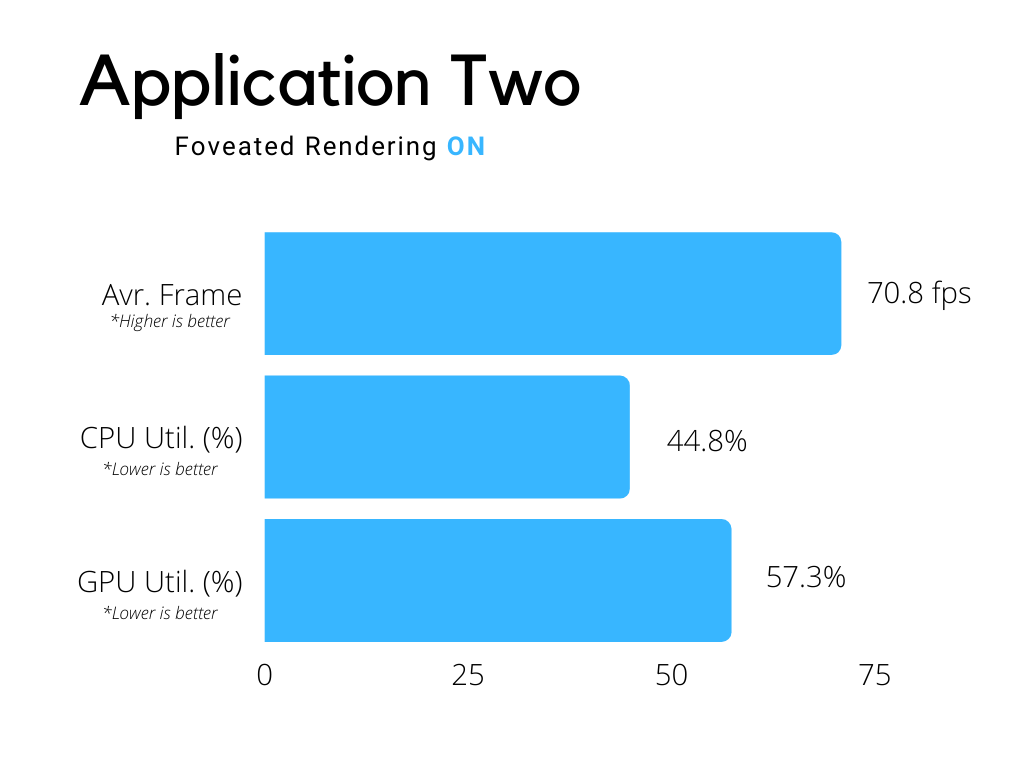

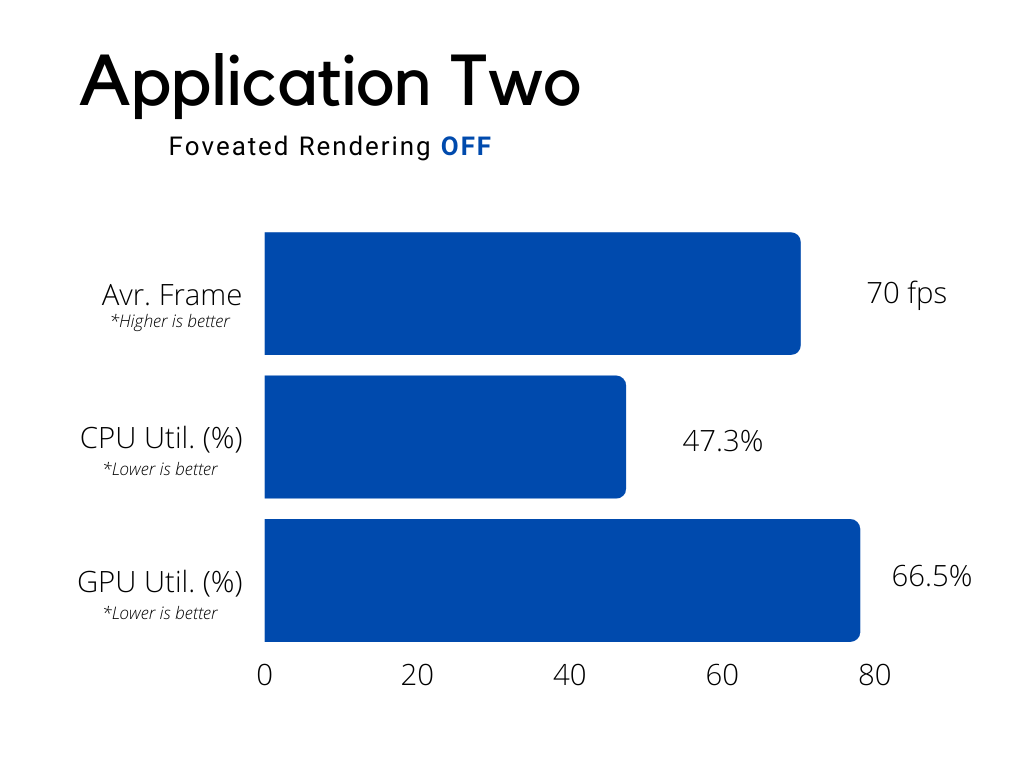

We couldn’t simply talk about this technology without testing it out for ourselves. Using FFR on the Oculus Quest (enabled via SideQuest), we ran 2 different applications with FR on and off.

The 3 performance metrics we observed were average frame rate, average CPU utilisation and GPU utilisation. Together these give us a good idea of what’s going on in terms of performance.

As we can see, graphics (GPU) utilisation is consistently reduced by applying FR, with little improvement in CPU load (as is to be expected, since foveation cuts shading work on the GPU rather than work on the CPU). The average frame rate saw only very minor improvements. However, it’s worth bearing in mind that the applications tested were optimised to be ‘lightweight’ for mobile VR headsets such as the Quest, so we would expect the frame rate to remain stable and/or high with or without FR.

Further testing would involve desktop applications that stress the system more. Such applications rely on the user to set limits to suit their hardware configuration, which is an unknown, in contrast to a closed system such as the Quest.

Closing Considerations

There is scope for foveated rendering to aid in more than just the delivery of next-generation graphical content. Eye-tracked foveated rendering brings with it many other opportunities.

Ethics aside, consider the ability to investigate, analyse and understand human behaviour through eye tracking, in the context of a safe and relatively inexpensive virtual scenario. This opens up a myriad of uses in VR and software development: training, design (both the approach and the result), planning, user experience, user interaction and so on can all be evolved through whichever metrics you may be tracking.

Some contextual questions that could be analysed for any given scenario: Where was the viewer looking, and for how long? Why? Were they distracted? Were they looking in the right place, and did they make the right choices? Are there indications as to what led them there or to that choice? Is peripheral vision a factor?
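The first of those questions ("where, and for how long?") is the easiest to put into code. Assuming an eye tracker gives us timestamped samples that have already been mapped to named regions of the scene, a minimal Python sketch of totalling up dwell time might look like this. The sample format and the region names are hypothetical.

```python
from collections import defaultdict


def dwell_times(samples):
    """Total time spent looking at each region.

    samples: list of (timestamp_seconds, region_name), sorted by time.
    Each sample's region is credited with the time until the next sample,
    so the final sample only marks the end of the recording.
    """
    totals = defaultdict(float)
    for (t0, region), (t1, _next_region) in zip(samples, samples[1:]):
        totals[region] += t1 - t0
    return dict(totals)


if __name__ == "__main__":
    # Made-up recording from an imaginary training scenario.
    recording = [
        (0.0, "dial"),
        (0.5, "dial"),
        (1.0, "window"),
        (1.25, "dial"),
        (2.0, "dial"),
    ]
    for region, seconds in dwell_times(recording).items():
        print(f"{region}: {seconds:.2f}s")
```

The "why" questions are much harder, of course, but totals like these are the raw material they would be built on.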

The information could be used to better inform and shape our final product, whatever that might be! Then again, perhaps it won’t!

What are your thoughts? Game-changing technology, or a marketing gimmick to shift more headsets? Let us know in the comments below!
